In Brief:

For decades, artificial intelligence (AI) mostly lived in the realm of science fiction. Now, it’s everywhere. AI assistants such as Siri and Bixby greet users in the morning, tools like Grammarly use natural language processing to help users minimize errors in their writing, and Google has begun to incorporate generative AI into its search experience. With AI present in so many aspects of our daily lives, it only makes sense that it’s also present in state and local policymaking.

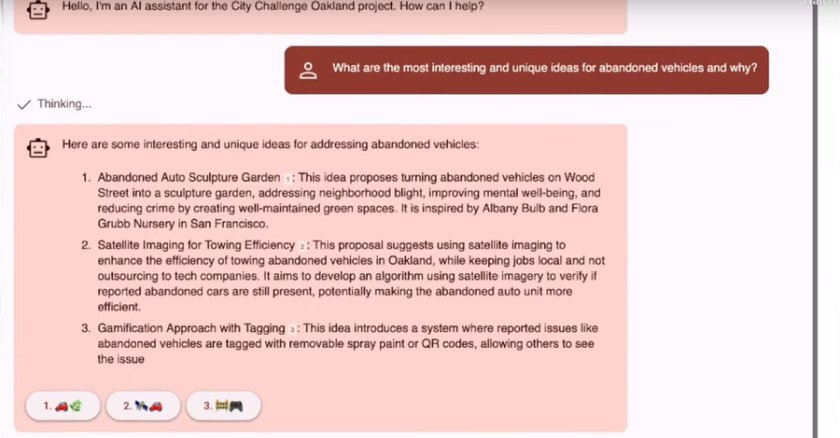

Today’s AI is actually “narrow artificial intelligence,” in which computers display humanlike capabilities in restricted ways. These systems perform specific tasks, such as data analysis, voice recognition and image classification, in order to answer questions, compile summaries and increase overall efficiency. When it comes to policymaking, one of the primary ways AI can help is by organizing and analyzing the massive amounts of data that states, counties and cities collect every day.

“AI has made its way into various aspects of government operations. However, we are at an early stage regarding AI's integration in policymaking,” says Michael J. Ahn, associate professor at the John W. McCormack Graduate School at the University of Massachusetts Boston. “We are in the process of understanding its full potential, developing regulatory frameworks and identifying methods for incorporating AI into government functions and policymaking. Additionally, we are engaged in deciding on critical issues encompassing data privacy, security and the establishment of ethical guidelines for utilizing AI in policymaking.”

By the end of the 2022 legislative session, the Electronic Privacy Information Center (EPIC) reported that seven states had introduced and passed legislation that either regulates artificial intelligence or establishes committees to seek transparency around the use of AI in state and local governments. The legislation ranges from an Alabama bill that limits the use of facial recognition and keeps AI from being the only basis for an arrest to a Mississippi law directing the state’s Department of Education to create and implement a computer science curriculum that includes teaching students about AI.

Does AI Have a Future in Policymaking?

The short answer is that AI will be present in policymaking as more and more agencies utilize the technology in their data analysis and other operations. As a developing technology, it has a near-endless potential for data analysis and distillation. The long answer is less clearly defined.

The future of AI as a policymaking tool will require attention to major concerns around its use. One worry with current AI involves training data: OpenAI, the company that selects the data ChatGPT is trained on, used a wide range of text, including books, articles and online content. As with many other AI models and the bots built on them, what gets fed into the model informs the stances it repeatedly takes, the material it develops and the biases it generates.

As Michael Ahn and Yu-Che Chen of the Brookings Institution point out, in the future, individuals could create and train their own ChatGPTs using specialized field data. For policymaking, this could mean that an AI trained on a closed set of data drawn from a specific agency’s inputs and outputs would be able to produce drafts of documents based solely on that material, with nothing brought in from outside. That could reduce the number of errors, biases and “hallucinations” in generated text, because the model could draw only on what it has been trained on and not on the wider repository of text and data that other AI systems are trained on.

Another big concern with AI in policymaking and beyond is the potential for the software to replace humans in their jobs, rather than being used as a tool to supplement their labor. Right now, AI technologies can’t consistently mimic long-form human writing. Human input (generating ideas, editing text, fact-checking information) is necessary to keep AI-generated text accurate and useful. As helpful as AI text generation is, the technology has limits that only humans can make up for, and those limits are well known to experts in the field.

What Happens Next

When it comes to developing best practices for the use of AI in policymaking, there are several things to keep in mind as government agencies assess its capabilities and limits.

First is transparency. Agencies should develop a consistent policy about how AI can and cannot be used in the process of developing materials around policy. If you have a concrete policy about AI — even if that policy bans certain kinds of applications like using ChatGPT to draft emails or reports — you’ll be able to manage and standardize expectations for the day-to-day uses of new or unfamiliar technology within your specific agency.

Second is leveraging expertise and forging connections with people who understand AI as a developing technology and can distill that information for an audience of early adopters. Much as cities, counties and local universities have developed regional collaborations, similar regional partnerships focused on AI could create an on-ramp to better data-driven policy.

Third is building an awareness that anything created, analyzed or distilled by AI should be monitored for so-called “hallucinations” and other errors, including those that stem from biases in the material the system is trained on. Hallucinations in particular are important to look out for. They occur when AI gives false or misleading answers to questions rather than the facts of a situation. In one well-known instance, AI generated legal cases and decisions that did not exist. Everything created by AI should be meticulously fact-checked and then edited to minimize the chance that hallucinations undermine the policymaking process.

Finally, account for the potential for inequity from day one. Some jurisdictions, especially those in rural areas, may not have the opportunity or the robust data needed to tap into AI tools to develop effective policies, compared with more economically affluent jurisdictions.

“Officials who do not have access to [developing AI technologies] may, as a result, have less responsive policy to their constituencies. They may be less likely, for instance, to use the best scientific and empirical information out there as they respond to things like environmental change problems or infrastructure degradation,” says Daniel Max Crowley, professor of human development, family studies and public policy at Pennsylvania State University. “These are types of things that an AI could quickly look at — local resources and constituent feedback — and prioritize, for instance, certain things in a way that could otherwise take a year of internal analysis.”

Crowley suggests that intermediary groups or connecting organizations that support, for example, small rural municipalities can work together to collectively bargain for, purchase and research the kinds of products that already make AI-assisted policymaking possible for larger and more affluent cities and counties.

As with many other tools used in governing, AI can usefully supplement what appointed and elected officials already rely on to analyze data and craft policy. However, just as with cracking open a new power tool, it’s important to understand how to use it and when. Developing early best practices and communicating with experts every step of the way will help policymakers use AI efficiently, rather than leaving it to gather dust in the metaphorical policymaking toolbox.